With the increasing use of cloud computing across the world, many companies that rely on it are adopting cloud cost awareness and optimization strategies to understand and manage the charges associated with their usage of different services.

In this blog post, we share best practices for AWS cost optimization and explain how we help our customers build cloud cost awareness. In simple terms, we treat cost as a first-class KPI for any workload on AWS, just like response time, latency and throughput. This adds another dimension to every workload, so cost gets reviewed and optimized in each phase of the Software Development Lifecycle (SDLC).

Significance of Cloud Cost Awareness

Amazon Web Services (AWS) is extremely attractive for its on-demand availability of services and the ability to deploy resources at the click of a button. Because the AWS infrastructure is so flexible and scalable, growth in an organization’s cloud footprint is almost inevitable. Most often, customers start working on cost optimization only when their month-end bill surprises them. That is when they realize they first need to identify the reasons for the unexpected increase.

The most common questions asked during this process are:

- Have we launched new services or workloads?

- Have we scaled up a workload?

- Was there any architectural change in the infrastructure?

- Did someone launch new workloads for testing and forget to hit pause?

Besides, AWS offers a plethora of options, and if you choose a service that does not fit your use case, you might end up spending more. Understanding the cost levers of each service therefore helps you build a robust and cost-efficient setup on AWS.

To tackle challenges like the ones mentioned above, cloud cost awareness becomes imperative.

Building your ecosystem of Cloud Cost Awareness

It is important to draw up a step-by-step plan for an ecosystem that keeps all stakeholders aware of the costs being incurred. This plan serves as a tangible representation of the process, as well as a template for budgeting and cost management. Some of the fundamentals it should cover are as follows:

- Build an accountable chargeback mechanism: In this phase, first ensure that you have identified and implemented a tagging strategy that adds business context to your usage. Tags let you define keys and values that can be used to categorize, filter, and sort resources. You can tag your resources by Application Name, Environment, Owner, Project and Cost-Center. If you have different requirements or use cases, feel free to add or modify tags. It might take 48 to 72 hours for tag-related data to show up in the cost dashboard. Soon after, however, you will find yourself spending less time digging to understand your costs and more time making informed decisions to control them, based on the rich data in your tag reports.

- Provide a mechanism for your engineering teams to drill down into the cost: Give each team a way to review the cost of its own resources and drill down without outside help. For example, if a team works on the search functionality of your front-end website, it should have a breakdown of cost by environment, by component and by the AWS services its application consumes.

- Ability to implement cost deviation alerts:

- This is a principal piece of the cost optimization exercise because it helps you identify bugs and cost anomalies within days instead of in the month-end bill. Teams should be able to set up alerts based on specific thresholds or on cost deviations by a certain percentage.

- Integrating these alerts into your Slack channels can further improve visibility and response times from your engineers.

- AWS Cost Anomaly Detection is a native tool that detects anomalies in spend patterns at a finer granularity and can send individual alerts or daily or weekly summaries.

- Ad-hoc measures: Once the above phases are complete, it is time to enforce additional processes that keep everyone answerable for the cost of their workloads.

- Schedule monthly sync-ups with all stakeholders and discuss cost trends for the last few weeks, including the reasons for any sudden increase or decrease in cost. Organizations that successfully manage their AWS spend usually have a clear expectation that everyone is responsible for costs. Just as with any other operational metric, such as performance or security, each team should be required to meet cost objectives when building systems.

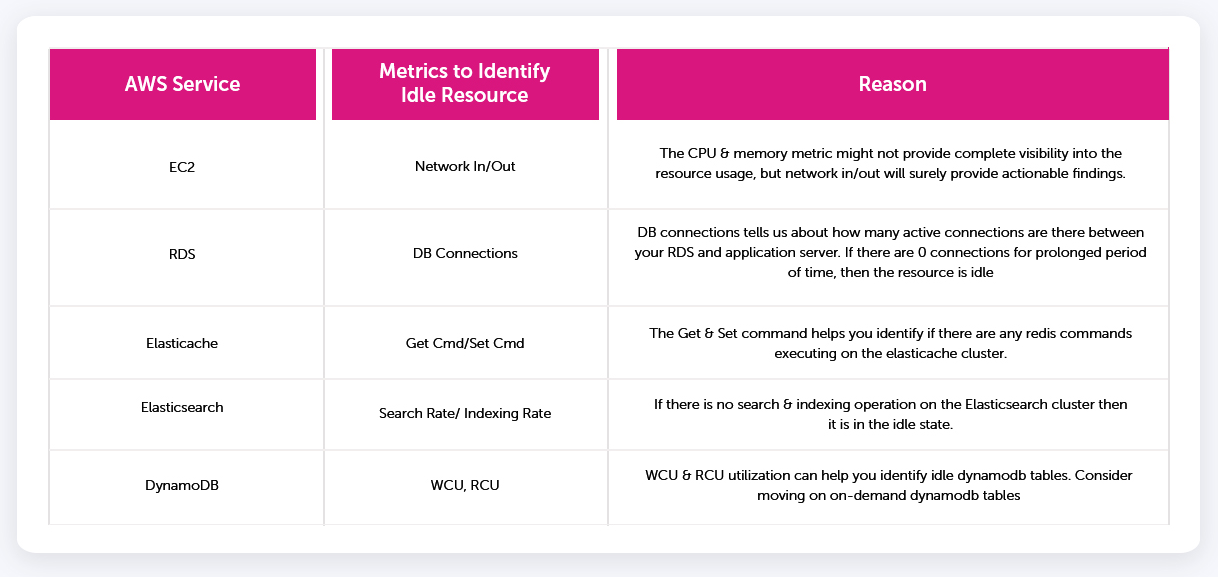

- A common mistake teams make on the AWS cloud is launching workloads for testing purposes and then forgetting about them. This creates cost inefficiencies and is a common reason for cost increases. Engineering teams habitually rely on CPU and memory utilization metrics to identify such zombie resources. This helps in most cases, but additional metrics are needed to refine the findings.
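Stepping back to the first three fundamentals in the list above (tagging, drill-down and deviation alerts), the logic behind them can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the tag keys, the shape of the cost line items and the function names are hypothetical, and the sample figures are made up. In practice the data would come from your tagged AWS cost reports rather than a hard-coded list.

```python
from collections import defaultdict

# Hypothetical required tag keys, mirroring the examples in the text.
REQUIRED_TAGS = ("Application", "Environment", "Owner", "Project", "CostCenter")


def missing_tags(tags):
    """Return the required tag keys that are absent or empty on a resource."""
    return [key for key in REQUIRED_TAGS if not tags.get(key)]


def cost_breakdown(line_items, *tag_keys):
    """Sum cost per combination of the given tag keys."""
    totals = defaultdict(float)
    for item in line_items:
        key = tuple(item["tags"].get(k, "untagged") for k in tag_keys)
        totals[key] += item["cost"]
    return dict(totals)


def deviation_alert(baseline, current, threshold_pct):
    """Return an alert message if cost moved more than threshold_pct, else None."""
    if baseline <= 0:
        return None  # no meaningful baseline to compare against
    change_pct = (current - baseline) / baseline * 100
    if abs(change_pct) > threshold_pct:
        return f"cost changed {change_pct:+.1f}% against baseline"
    return None


# Made-up sample line items (assumed shape: service, cost, tags).
full_tags = {"Application": "search", "Owner": "team-search",
             "Project": "storefront", "CostCenter": "cc-101"}
line_items = [
    {"service": "AmazonEC2", "cost": 120.0,
     "tags": {**full_tags, "Environment": "prod"}},
    {"service": "AmazonEC2", "cost": 30.0,
     "tags": {**full_tags, "Environment": "staging"}},
    {"service": "AmazonS3", "cost": 10.0,
     "tags": {"Application": "search", "Environment": "prod"}},
]

untagged = [i["service"] for i in line_items if missing_tags(i["tags"])]
by_env = cost_breakdown(line_items, "Application", "Environment")
alert = deviation_alert(baseline=100.0, current=130.0, threshold_pct=20)
```

The message returned by `deviation_alert` is what a team might forward to a Slack channel; how that delivery happens is left out here on purpose.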

Conclusion

After completing all four steps, you will have a good mechanism to identify cost anomalies and act on them in a timely manner. This system will help you gauge cost along different parameters and build cost-aware teams. Cost optimization is an ongoing process and should be made part of your existing DevOps processes.

Additionally, to make AWS cost more meaningful, customers map it to business metrics such as orders per month, traffic served per month or transactions per month. Dividing the total AWS cost by each of these metrics gives them visibility into the cost per transaction and helps them compare their spending against industry standards.
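As a small worked example of this unit-cost idea, the per-unit figure is the total AWS cost divided by the business volume for the same period. The numbers and names below are invented for illustration and do not come from any real bill.

```python
def unit_cost(total_cost, business_volume):
    """Cost per business unit: total cost divided by volume for the same period."""
    if business_volume <= 0:
        raise ValueError("business_volume must be positive")
    return total_cost / business_volume


# Hypothetical monthly figures.
total_aws_cost = 12_500.0
monthly_volume = {"orders": 50_000, "transactions": 200_000}

cost_per_unit = {
    name: unit_cost(total_aws_cost, volume)
    for name, volume in monthly_volume.items()
}
```

Tracking these ratios month over month shows whether spend is growing faster or slower than the business it supports.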
