Picking a compute model on Google Cloud might look like a simple choice between two services. In practice, it is far more nuanced than a “this or that” decision. Select the wrong infrastructure at the outset and you can expect months of rework, bill shock, and scaling failures once your application reaches production and meets real user traffic.

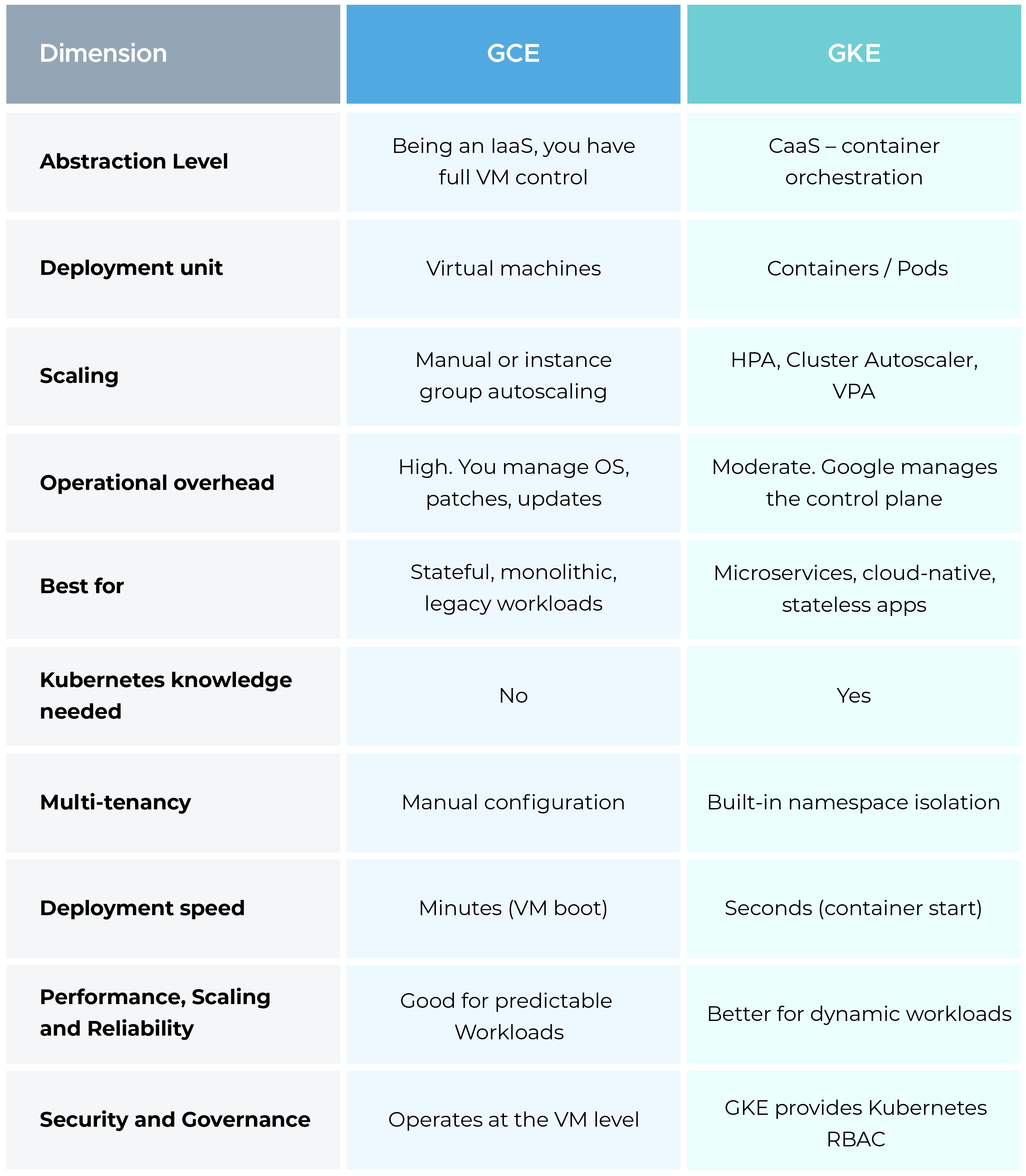

Google Compute Engine and Google Kubernetes Engine are both mature, industry-tested compute options available on GCP. Each is built for different use cases and workloads, carries different operational responsibilities, and behaves very differently at scale.

In this blog, we compare the two in detail across cost, management overhead, scaling, security, and more, so you can choose the service that actually holds up as your workloads grow.

What Is Google Compute Engine (GCE)?

GCE is Google Cloud's IaaS offering. You provision virtual machines, pick your OS, configure CPU and memory, attach storage, and manage everything from there. It behaves like a traditional server, without the hardware rack and the datacenter lease.

When opting for Google Compute Engine, you have end-to-end ownership and control over the environment, which means installing only the services you need, choosing the OS you want, and maintaining lifecycle control at the machine level.

Key features:

- Linux and Windows Virtual Machines across predefined and custom machine types

- Per-second billing with automatic sustained-use discounts

- Committed Use Discounts (GCP's equivalent of reserved instances) offer up to 57% off for predictable workloads

- Preemptible and Spot VMs at 60-91% off for fault-tolerant batch jobs

- GPU and TPU support for ML training and inference

- Persistent disk storage that survives VM restarts

- Free tier: one e2-micro VM up to 720 hours/month in select regions

GCE handles web servers, APIs, data pipelines, CI/CD runners, lift-and-shift migrations, and any application that needs full OS-level control to run properly.

What Is Google Kubernetes Engine (GKE)?

GKE is Google Cloud’s managed Kubernetes platform. It handles the orchestration of containerized applications, including deployment, scaling, networking, storage, and health monitoring, across clusters of nodes. Google manages the control plane, while you define workloads and configure clusters, and Kubernetes takes care of scheduling and resource allocation.

GKE runs in two modes. Standard mode provides node-level control, whereas Autopilot fully manages the nodes for you, with billing based on pods instead of virtual machines.

Key features:

- Fully managed Kubernetes control plane with 99.95% SLA on regional clusters

- Cluster and node autoscaling tied to actual workload demand

- Vertical Pod Autoscaler for automated resource right-sizing

- Spot Pods (Autopilot) and Spot VMs (Standard) for significant cost reduction

- Native integration with Cloud Monitoring, Cloud Logging, BigQuery, Pub/Sub, and Cloud Storage

- GKE Enterprise (formerly Anthos) for hybrid and multi-cloud cluster management

- Free tier: $74.40/month in credits per billing account, covering one zonal Standard or Autopilot cluster

GKE is the preferred choice for cloud-native architectures, microservices, stateless applications, data processing pipelines, and ML workloads that need dynamic scaling.

With GCE, you get complete control of the virtual machine environment. GKE, by contrast, runs your application inside pods scheduled across a managed cluster, at a higher level of abstraction.

The difference may seem trivial at a high level, but it becomes evident in day-to-day workflows, across engineering operations, and, most importantly, on the bill.

That’s why GCP cost optimization should become a priority right from the start.

GCE offers more granular flexibility and rewards teams that can put it to use. GKE trades some of that granularity for automated cluster management, self-healing deployments, and built-in orchestration that would take months to build manually on VMs.

At the scaling layer, Google Compute Engine provisions new VMs through managed instance groups, with autoscaling driven by CPU utilization, load-balancing capacity, or Cloud Monitoring metrics.

Google Kubernetes Engine scales at two levels simultaneously. Pods expand horizontally within existing nodes, then nodes are provisioned when pods can no longer be scheduled.

That layered response is faster and more granular when demand spikes suddenly.

Google Compute Engine vs Google Kubernetes Engine Cost Breakdown

GCE pricing:

- Billed per second based on machine type, vCPU, memory, region, and storage

- Sustained-use discounts kick in automatically when VMs run 25%+ of a month

- Committed Use Discounts: up to 57% off for 1-3 year commitments

- Preemptible/Spot VMs: 60-91% off standard pricing for interruptible workloads

- No base management fee. You pay for resources provisioned, nothing more
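As a rough illustration of how these discounts change a monthly bill, the sketch below applies a flat discount to an on-demand rate. The hourly rate is a hypothetical placeholder rather than a published price, the 730-hour month is an approximation, and real sustained-use discounts are tiered rather than flat, so treat the result as a ballpark only.

```python
HOURS_PER_MONTH = 730  # approximate average month; an assumption of this sketch


def monthly_vm_cost(hourly_rate: float, discount: float = 0.0,
                    hours: float = HOURS_PER_MONTH) -> float:
    """Monthly cost of one VM after a flat fractional discount (0.57 = 57% off)."""
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in the range [0, 1)")
    return round(hourly_rate * hours * (1.0 - discount), 2)


# Hypothetical on-demand rate, for illustration only.
on_demand_rate = 0.10

for label, discount in [
    ("on-demand", 0.0),
    ("committed use, best case", 0.57),
    ("Spot, low end", 0.60),
    ("Spot, high end", 0.91),
]:
    print(f"{label}: ${monthly_vm_cost(on_demand_rate, discount)}")
```

Swapping in the actual rate for your machine type and region gives a quick first comparison before reaching for the pricing calculator.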

GKE pricing:

- Cluster management fee: $0.10/hour per cluster (roughly $73/month), charged regardless of cluster size or topology

- Free tier credit: $74.40/month covers one zonal Standard or Autopilot cluster

- Standard mode: pay for underlying GCE VMs (nodes), starting from $0.0449/hour

- Autopilot mode: billed per pod on CPU, memory, and storage requested—no VM management

- Persistent Disk: $0.04/GB/month (standard), $0.17/GB/month (SSD)

- Committed Use Discounts apply to compute inside GKE clusters
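The line items above can be combined into a back-of-the-envelope estimate for a Standard cluster. This is a minimal sketch that uses only the figures quoted in the list; the function name, the 730-hour month, and the assumption that every node runs at the entry-level rate are illustrative choices, not part of any Google tooling.

```python
MANAGEMENT_FEE_PER_HOUR = 0.10  # per cluster, as quoted above
FREE_TIER_CREDIT = 74.40        # monthly credit per billing account
NODE_RATE_PER_HOUR = 0.0449     # entry-level node price quoted above
STANDARD_PD_PER_GB = 0.04       # standard persistent disk, per GB-month


def gke_standard_monthly(nodes: int, disk_gb: float, hours: float = 730,
                         free_tier_applies: bool = True) -> dict:
    """Rough monthly estimate for one GKE Standard cluster."""
    fee = MANAGEMENT_FEE_PER_HOUR * hours
    if free_tier_applies:
        # The credit can offset the fee but never turns it negative.
        fee = max(0.0, fee - FREE_TIER_CREDIT)
    compute = nodes * NODE_RATE_PER_HOUR * hours
    storage = disk_gb * STANDARD_PD_PER_GB
    return {
        "management_fee": round(fee, 2),
        "compute": round(compute, 2),
        "storage": round(storage, 2),
        "total": round(fee + compute + storage, 2),
    }


print(gke_standard_monthly(nodes=3, disk_gb=100))
print(gke_standard_monthly(nodes=3, disk_gb=100, free_tier_applies=False))
```

The second call models an additional cluster on the same billing account, where the free tier credit has already been used.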

Where GCE wins on cost: Long-running, predictable workloads where sustained-use discounts compound month after month.

Applications needing custom machine configurations that are unavailable in standard Kubernetes node pools. Teams running fewer, larger workloads that don't need container orchestration layered on top.

Where GKE wins on cost: Variable workloads with unpredictable traffic patterns.

Autopilot's pay-per-pod model means you are not billed for idle node capacity. Applications running many small containers also benefit, because bin-packing across nodes increases overall utilization.

By running node pools on Spot (formerly preemptible) VMs, GKE can deliver cost savings of nearly 70% over on-demand GCE instances for containerized workloads that tolerate interruption.

One nuance worth understanding on Autopilot: per-pod pricing runs roughly 191% higher than Standard node compute on paper. The savings surface through efficiency gains: if workloads consume less than 53.5% of a Standard node's CPU and 50% of its memory, Autopilot eliminates idle node spend and comes out ahead.
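The break-even reasoning can be sketched in a few lines. The model here is a simplification and an assumption of this example: Autopilot charges a fixed multiple of the Standard unit price, but only for the resources pods request, while Standard charges for the whole node. Under that model, Autopilot is cheaper whenever utilization falls below the reciprocal of the price multiple. The multiples passed in below are hypothetical values, chosen only so the thresholds land near the CPU and memory figures quoted above.

```python
def breakeven_utilization(price_multiple: float) -> float:
    """Node utilization below which per-pod billing beats per-node billing."""
    if price_multiple <= 0:
        raise ValueError("price_multiple must be positive")
    return min(1.0, 1.0 / price_multiple)


def cheaper_mode(utilization: float, price_multiple: float) -> str:
    """Compare costs relative to one fully billed Standard node (cost 1.0)."""
    autopilot_cost = price_multiple * utilization
    return "autopilot" if autopilot_cost < 1.0 else "standard"


# Hypothetical multiples: 1.87x for CPU, 2.0x for memory.
print(round(breakeven_utilization(1.87), 3))
print(breakeven_utilization(2.0))
print(cheaper_mode(utilization=0.40, price_multiple=2.0))
print(cheaper_mode(utilization=0.75, price_multiple=2.0))
```

The practical takeaway is that the comparison depends on how full your Standard nodes actually run, so measure utilization before assuming either mode is cheaper.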

Regardless of the choice, oversized nodes, idle resources, and unoptimized workloads pile up costs fast on both platforms. CloudKeeper's Kubernetes Management and Optimization service tackles GKE spending through rightsizing, spot protection, and ongoing governance across clusters.

Operational Overhead: What You're Actually Signing Up For

On GCE, you own the full stack. OS updates, security patches, monitoring agents, logging configurations, network rules, and instance lifecycle management become the responsibilities of your cloud team.

Engineers with strong Linux administration backgrounds handle this well. For teams without that depth, the management burden drains engineering time that could go toward shipping product.

On GKE, Google handles control plane management, version upgrades, and cluster repairs. Your team focuses on workload configurations, resource requests, namespace policies, and application deployments.

Kubernetes is a challenging skill to master in its own right, though. YAML-heavy configurations, pod scheduling behavior, container networking quirks, and persistent storage management all require real expertise to get right.

Performance, Scaling, and Reliability

GCE performs well for stable workloads where resource requirements are known and predictable. Vertical scaling requires VM downtime. Horizontal scaling through managed instance groups works, but VM boot times of 1-3 minutes mean demand spikes take a few minutes to absorb.

GKE handles dynamic workloads better. Pods start in seconds. The Cluster Autoscaler provisions new nodes automatically when existing capacity fills. Vertical Pod Autoscaler adjusts resource requests without manual work.

For applications where traffic spikes are common, such as consumer apps, SaaS platforms, and e-commerce platforms, GKE can often respond more efficiently than GCE because it supports built-in autoscaling at the pod level through the Horizontal Pod Autoscaler and at the node level through Cluster Autoscaler.
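To make the two scaling layers concrete, the sketch below pairs the replica formula documented for the Horizontal Pod Autoscaler with a deliberately simplified node estimate. The node calculation is an assumption of this example: the real Cluster Autoscaler simulates scheduling against memory, affinity, and other constraints, and the real HPA also applies a tolerance band and stabilization windows that are omitted here.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_metric: float,
                         target_metric: float) -> int:
    """Core HPA formula: ceil(current_replicas * current_metric / target_metric)."""
    return math.ceil(current_replicas * (current_metric / target_metric))


def nodes_needed(replicas: int, pod_cpu_request_m: int,
                 node_allocatable_cpu_m: int) -> int:
    """Simplified node count based on CPU requests only (millicores)."""
    pods_per_node = node_allocatable_cpu_m // pod_cpu_request_m
    if pods_per_node < 1:
        raise ValueError("a single pod does not fit on this node size")
    return math.ceil(replicas / pods_per_node)


# Four replicas averaging 90% CPU against a 60% target scale out to six pods.
replicas = hpa_desired_replicas(current_replicas=4, current_metric=90,
                                target_metric=60)
print(replicas)

# Hypothetical sizes: 500m CPU per pod, 3500m allocatable CPU per node.
print(nodes_needed(replicas, pod_cpu_request_m=500, node_allocatable_cpu_m=3500))
print(nodes_needed(20, pod_cpu_request_m=500, node_allocatable_cpu_m=3500))
```

In this example the first spike is absorbed entirely by new pods on existing capacity. Only the larger jump to 20 replicas requires extra nodes, which is the slower of the two layers.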

High availability on GCE requires deliberate configuration. Regional managed instance groups, health checks, and load balancers must be explicitly set up to achieve redundancy across zones.

GKE provides strong high-availability capabilities by default at the workload level through pod replication and automatic rescheduling when a node fails. Additionally, regional GKE clusters can distribute control plane and workloads across multiple zones, although multi-zone or regional configuration must still be explicitly enabled.

Security and Governance

GCE security operates at the VM level. Firewall rules, IAM policies, OS hardening, and network configuration must be set up and maintained by your team. Patching requires your team to apply updates, test compatibility, and schedule maintenance windows without disrupting running workloads.

GKE provides Kubernetes RBAC for fine-grained access control at namespace, resource, and operation levels. Workload Identity ties Kubernetes service accounts to Google Cloud IAM, removing the need to manage credentials inside containers entirely. Node auto-upgrade keeps underlying VMs patched without manual coordination.

Both support VPC Service Controls and Cloud Audit Logs. GKE adds Binary Authorization for supply chain security, Pod Security Admission (the successor to the deprecated pod security policies), network policies between namespaces, and container image vulnerability scanning through Artifact Registry.

For teams operating under compliance requirements, GKE’s centralized governance, config management for policy enforcement, pod-level audit logging, and namespace-based isolation make demonstrating control to auditors considerably more straightforward.

CloudKeeper's FinOps consulting ties governance frameworks to cost optimization, keeping security posture and spending discipline working together.

When Google Compute Engine Makes More Sense

Legacy or monolithic applications that weren't built for containers. Containerizing a monolith typically requires significant refactoring. Running it on VMs first gets you into the cloud immediately, without the architectural overhaul.

Stateful workloads with complex storage requirements. Databases, file servers, and applications that tightly couple process state to the underlying OS run more naturally on VMs, where filesystem and storage configuration stay fully in your control.

Maximum infrastructure control. Specialized hardware configurations, specific kernel versions, custom networking setups, or OS-level performance tuning that Kubernetes simply can't accommodate.

Teams without Kubernetes experience who need to ship now without a steep ramp. GCE runs on skills already in the building.

When Google Kubernetes Engine Makes More Sense

Cloud-native microservices. Independent services need independent scaling, deployment, and lifecycle management, and Kubernetes was built exactly for this pattern.

Frequent deployment cycles. Rolling updates are built into Kubernetes Deployments, and canary and blue-green strategies build on the same primitives, so releases on GKE can be automated with far less custom tooling than VM-based deployments require.

Traffic that moves unpredictably. Consumer apps, SaaS platforms, and e-commerce workloads benefit from GKE's sub-minute scaling response in ways that managed instance groups on GCE can't match.

Teams already comfortable with Kubernetes who can operate the platform without a prolonged learning investment.

Common Mistakes Cloud Teams Make

Picking GKE for workloads that don't need it. A three-tier web app running fine on two GCE VMs doesn't justify Kubernetes overhead. Adding complexity without a corresponding benefit is just a waste.

Underestimating Kubernetes operations. Debugging pod scheduling failures, container networking problems, and persistent volume issues requires real depth. Teams that underestimate this often spend weeks troubleshooting problems that wouldn't exist at all on GCE.

Skipping resource requests in GKE. Containers without proper CPU and memory requests get scheduled onto nodes badly, leading to bloated clusters and wasted spend. Estimated wasted cloud spend hit 27% industry-wide in 2025 and is only projected to rise in 2026, and Kubernetes resource misconfiguration is a significant contributor.
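A quick way to catch this before deployment is to scan manifests for containers that lack requests. The sketch below assumes the manifest has already been parsed into a Python dictionary (for example, from YAML) and follows the standard Deployment layout of `spec.template.spec.containers[].resources.requests`. The function name and the sample manifest are hypothetical.

```python
def containers_missing_requests(manifest: dict) -> list[str]:
    """List containers in a Deployment-style manifest lacking CPU or memory requests."""
    pod_spec = manifest.get("spec", {}).get("template", {}).get("spec", {})
    findings = []
    for container in pod_spec.get("containers", []):
        requests = (container.get("resources") or {}).get("requests") or {}
        absent = [name for name in ("cpu", "memory") if name not in requests]
        if absent:
            label = container.get("name", "<unnamed>")
            findings.append(f"{label}: missing {', '.join(absent)}")
    return findings


# Hypothetical manifest with one complete, one partial, and one empty container.
deployment = {
    "kind": "Deployment",
    "spec": {"template": {"spec": {"containers": [
        {"name": "api",
         "resources": {"requests": {"cpu": "250m", "memory": "256Mi"}}},
        {"name": "sidecar", "resources": {"requests": {"cpu": "50m"}}},
        {"name": "worker"},
    ]}}},
}

for finding in containers_missing_requests(deployment):
    print(finding)
```

A check like this fits naturally into a CI step, so missing requests are flagged in review rather than discovered on the bill.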

Ignoring node pool rightsizing. Running uniform large node types across workloads with varying resource profiles leaves significant idle capacity on the table. CloudKeeper's cloud architecture optimization helps teams build node pool strategies that actually reflect their workload patterns.
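The effect of node size on idle capacity can be estimated with a simple packing exercise. The sketch below uses first-fit decreasing on CPU requests only, which is an assumption of this example rather than how the Kubernetes scheduler works; memory, system reservations, and scheduling constraints are ignored. The pod sizes and node sizes are hypothetical.

```python
def pack_pods(pod_cpu_requests_m: list[int], node_cpu_m: int) -> int:
    """Nodes needed using first-fit decreasing on CPU requests (millicores)."""
    free_per_node: list[int] = []
    for request in sorted(pod_cpu_requests_m, reverse=True):
        if request > node_cpu_m:
            raise ValueError("pod request exceeds node capacity")
        for index, free in enumerate(free_per_node):
            if request <= free:
                free_per_node[index] = free - request
                break
        else:
            # No existing node has room, so open a new one.
            free_per_node.append(node_cpu_m - request)
    return len(free_per_node)


def cpu_utilization(pod_cpu_requests_m: list[int], node_cpu_m: int) -> float:
    """Fraction of provisioned CPU that is actually requested by pods."""
    node_count = pack_pods(pod_cpu_requests_m, node_cpu_m)
    if node_count == 0:
        return 0.0
    return sum(pod_cpu_requests_m) / (node_count * node_cpu_m)


# Hypothetical workload: six small pods and two larger ones (7000m in total).
pods = [500] * 6 + [2000] * 2

for node_size in (4000, 8000, 16000):
    print(node_size, pack_pods(pods, node_size), cpu_utilization(pods, node_size))
```

With this workload, the largest node size leaves more than half of the provisioned CPU idle, which is the pattern rightsizing is meant to remove.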

Decision Framework: How to Choose Between GCE and GKE

To decide which service to choose, start by answering these six questions.

- Are your workloads containerized? No → start with GCE. Yes, or planning to containerize → GKE.

- Does your team know Kubernetes? No experience → GCE or invest in training first. Experienced team → GKE.

- How variable is your traffic? Predictable and steady → GCE with committed use discounts. Highly variable → GKE Autopilot.

- Do services need to scale independently? Yes → GKE. Single application → GCE.

- Are there compliance requirements for workload isolation? Complex multi-tenant governance → GKE. Straightforward → either.

- What's your timeline? Ship quickly with minimal ramp-up → GCE. Building toward cloud-native maturity → GKE.
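The six questions can also be expressed as a small scoring helper. This is a minimal sketch: the equal weighting and the majority-vote rule are assumptions of this example, and the class and field names are hypothetical. In practice, a single hard constraint, such as a workload that cannot be containerized, may outweigh everything else.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    containerized: bool                    # or planning to containerize
    team_knows_kubernetes: bool
    traffic_is_variable: bool
    services_scale_independently: bool
    complex_multi_tenant_governance: bool
    must_ship_quickly: bool


def recommend(w: Workload) -> str:
    """Tally the six answers and return 'GKE', 'GCE', or 'either' on a tie."""
    gke_votes = sum([
        w.containerized,
        w.team_knows_kubernetes,
        w.traffic_is_variable,
        w.services_scale_independently,
        w.complex_multi_tenant_governance,
        not w.must_ship_quickly,
    ])
    # Straightforward compliance needs favor neither side, so they add no GCE vote.
    gce_votes = sum([
        not w.containerized,
        not w.team_knows_kubernetes,
        not w.traffic_is_variable,
        not w.services_scale_independently,
        w.must_ship_quickly,
    ])
    if gke_votes > gce_votes:
        return "GKE"
    if gce_votes > gke_votes:
        return "GCE"
    return "either"


legacy_app = Workload(False, False, False, False, False, True)
microservices = Workload(True, True, True, True, True, False)
print(recommend(legacy_app))
print(recommend(microservices))
```

A tie is a signal to look more closely at the questions that matter most to your team rather than a recommendation to split the difference.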

Conclusion: Google Compute Engine or Google Kubernetes Engine?

To sum up in one line: Choose GCE for predictable, VM-based workloads requiring full control, and choose GKE for containerized, scalable applications that demand automation and rapid growth.

GCE suits teams running traditional workloads, needing full infrastructure control, or operating without Kubernetes depth. Reliable, flexible, and cost-effective for predictable compute on long-running VMs.

GKE suits teams running containerized applications, building out microservices, or needing fast autoscaling across workloads that don't stay predictable. The operational investment pays back through deployment velocity, scaling efficiency, and infrastructure automation that compound over time.

Many mature organizations run both: GCE for legacy systems and databases, and GKE for modern application services. Starting on GCE and migrating containerizable workloads to GKE incrementally as expertise grows is a common and sensible path.

Whichever direction you go, cost control needs attention from day one. Both GCE and GKE generate sprawl through idle resources, oversized instances, and configurations that made sense initially but never got revisited.

CloudKeeper's platform suite delivers real-time visibility, automated rightsizing, and continuous governance across both compute models—so performance stays where it needs to be without the bill shock.
